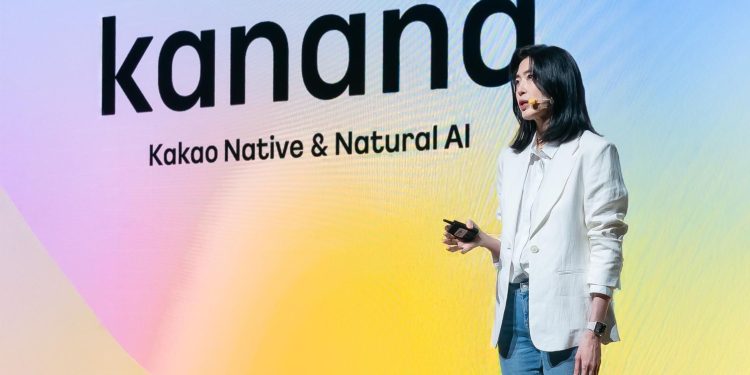

Kakao introduced Kanana-o, South Korea’s first multimodal large language model (LLM), capable of understanding and processing text, voice, and images together. The company shared details of the model’s performance and development through its official tech blog on the 1st, highlighting its ability to listen, speak, and interact human-likely by integrating advanced voice technology.

According to Kakao, Kanana-o demonstrates a competitive edge on par with global AI systems developed by OpenAI and Google. The company also unveiled Kanana-a, an audio-focused language model.

Kanana-o, an artificial intelligence model, integrates the understanding and processing of text, voice, and images simultaneously. This multimodal system allows users to input queries in any combination of the three forms of communication, with the model generating responses in text or natural voice tailored to the context. Kakao achieved this integration by merging its existing models, Kana-v, which specializes in image processing, and Kana-a, which focuses on audio understanding and generation, using a technology called “Model Merge.”

The development of Kanana-o leverages a large-scale Korean dataset to accurately reflect the distinctive features of the Korean language, including its tense variations, intonation and unique speech structure. The model also has the ability to interpret regional dialects, such as those from Gyeongsang and Jeju provinces, converting them into standard Korean and producing natural, fluent speech. This ensures the model can effectively handle nuanced Korean communication and regional linguistic differences.

One of the standout features of Kanana-o is its use of speech emotion recognition technology, which enables the model to analyze nonverbal signals such as intonation, voice trembling, and speech patterns accurately. This helps the system understand and interpret user emotions, allowing it to generate responses that are contextually relevant and emotionally appropriate. This capability brings the model closer to mimicking human communication, making interactions with the AI more natural and empathetic.

In performance tests, Kanana-o showed a high level of competence, comparable to global models like OpenAI’s GPT-4 and Google’s Gemini 1.5 Pro, especially in tasks related to the Korean language. The model excelled in emotional recognition, outperforming its international counterparts in both Korean and English. This achievement indicates the potential of Kanana-o to revolutionize AI communication by recognizing and responding to human emotions, a significant step in the evolution of multimodal AI models.

Kakao aims to further enhance Kanana-o’s capabilities by refining its speech synthesis technology, with plans for the development of a Korean voice tokenizer for continuous performance improvement. The company’s goal is to establish a strong competitive presence in the AI industry, building on its unique multimodal technology. By sharing its research and contributing to the development of Korea’s AI ecosystem.

Kakao plans to advance Kanana-o by focusing on three key areas: enabling multi-turn conversations, enhancing the model’s ability to handle simultaneous two-way data, and improving safety features to prevent inappropriate outputs. These efforts aim to deliver more natural and fluid interactions in voice-based conversation environments, bringing AI communication closer to real-life dialogue.

Kim Byung-hak, head of performance at Kakao Kanana, emphasized that the Kana model is evolving into an AI that processes text and sees, hears, speaks, and empathizes like a human. He added that Kakao will continue to build on its proprietary multimodal technology to strengthen its AI competitiveness while actively contributing to the growth of South Korea’s AI ecosystem through open research and development.